As a memorandum for a university assignment I implemented: I created a neural network from scratch in C++ to classify MNIST handwritten digits, implementing everything from matrix operations to backpropagation without using any deep learning frameworks.

repo: https://github.com/romophic/MNIST_cpp

1. Principles

I will explain how data is processed within the constructed neural network.

1.1 Flow from Input to Output

A neural network is made up of multiple stacked layers. Data flows in one direction from the input layer to the hidden layers, and finally to the output layer. This is called forward propagation.

When data is transmitted from one layer to the next, the strength of each signal is modified by weights. If we let the input data be , the signal transmitted to the next layer be , and the matrix consolidating the weights be , this relationship can be expressed simply as a matrix product:

This equation means that signals from all neurons in the previous layer are multiplied by their respective weights and gathered at the neurons in the next layer. The gathered signal is not sent to the next layer as is, but passes through a filter called an activation function. This determines the neuron’s firing (whether to transmit the signal to the next node). In this experiment, two types of functions were used.

For the hidden layer, the ReLU function was used. This outputs 0 if the input is negative, and outputs the value as is if it is positive.

This allows filtering out unnecessary information and transmitting only the important features to the next layer. Because the calculation is extremely simple, it has the advantage of speeding up the learning process.

For the final output layer, the Sigmoid function was used. This converts any given value into a number between and .

Since the output falls between and , it can be interpreted as the probability of the digit being a specific number.

1.2 Learning Mechanism (Backpropagation)

Initially, the weights are set to random values, so the network will not produce the correct answers. Therefore, we calculate the error between the network’s answer and the training data. To minimize this error, we incrementally adjust the weights backward from the output layer to the input layer. By repeating this process, the neural network gradually becomes capable of making correct judgments.

2. Methodology

The implementation utilizes C++23 with Clang version 21.1.7 as the compiler. The Eigen library was used for matrix calculations. The details of the model constructed in this experiment are as follows:

- Input layer: 784 nodes (corresponding to pixel image data)

- Hidden layer: 128 nodes (Activation function: ReLU)

- Output layer: 10 nodes (Activation function: Sigmoid, corresponding to digits 0-9)

To ensure smooth learning, the initial values of the weights must be carefully determined. He initialization was used for the hidden layer weights, and Xavier initialization was used for the output layer weights. These methods set random numbers to preserve the variance of the data, which prevents the learning process from stalling early on.

2.1 Learning Algorithm

The source code for the learning component is explained in stages. Below is an overview of the implementation of the class NeuralNetwork, which handles the neural network.

Listing 1: NeuralNetwork Class Implementation (Overview)

class NeuralNetwork { private: Eigen::MatrixXd w_ih; // Input layer -> Hidden layer weights Eigen::MatrixXd w_ho; // Hidden layer -> Output layer weights Eigen::VectorXd hidden_outputs; // Hidden layer outputs

public: // Initialization NeuralNetwork(); // Perform forward propagation Eigen::VectorXd query(const Eigen::VectorXd& _inputs); // Train void train(const Eigen::VectorXd& _inputs, const Eigen::VectorXd& _targets) {}};It holds the weight matrices for the input, hidden, and output layers as variables. Next, the content of NeuralNetwork responsible for initialization is shown below.

Listing 2: Initialization Implementation

NeuralNetwork() { // Hidden layer double weight_scale_ih = sqrt(2.0 / INPUT_NODES); // He initialization coefficient w_ih = Eigen::MatrixXd::Random(HIDDEN_NODES, INPUT_NODES) * weight_scale_ih; // He initialization // Output layer double weight_scale_ho = sqrt(1.0 / HIDDEN_NODES); // Xavier initialization coefficient w_ho = Eigen::MatrixXd::Random(OUTPUT_NODES, HIDDEN_NODES) * weight_scale_ho; // Xavier initialization}The contents of the function query, which performs forward propagation, are shown below.

Listing 3: Forward Propagation Implementation

// Perform forward propagationEigen::VectorXd query(const Eigen::VectorXd& _inputs) { // Hidden layer Eigen::VectorXd hidden_inputs = w_ih * _inputs; // The result of multiplying the weight matrix and the input matrix is the output hidden_outputs = hidden_inputs.unaryExpr(&relu); // Apply ReLU to the output

// Output layer Eigen::VectorXd final_inputs = w_ho * hidden_outputs; // The result of multiplying the weight matrix and the hidden layer output matrix is the output Eigen::VectorXd final_outputs = final_inputs.unaryExpr(&sigmoid); // Apply Sigmoid to the output return final_outputs;}Finally, the implementation of train, the section where learning occurs, is explained.

Listing 4: Learning (Backpropagation) Implementation

// Learningvoid train(const Eigen::VectorXd& _inputs, const Eigen::VectorXd& _targets) { Eigen::VectorXd final_outputs = query(_inputs); // Forward propagation

Eigen::VectorXd output_errors = _targets - final_outputs; // Error calculation Eigen::VectorXd hidden_errors = w_ho.transpose() * output_errors; // Hidden layer error calculation

// Output layer gradients Eigen::VectorXd output_gradients = output_errors.cwiseProduct(final_outputs.unaryExpr(&sigmoid_d)); w_ho += LEARNING_RATE * (output_gradients * hidden_outputs.transpose()); // Update output layer weights

// Hidden layer gradients Eigen::VectorXd hidden_gradients = hidden_errors.cwiseProduct(hidden_outputs.unaryExpr(&relu_d)); w_ih += LEARNING_RATE * (hidden_gradients * _inputs.transpose()); // Update hidden layer weights}2.2 Loading the MNIST Dataset

The MNIST dataset consists of the following 4 files. Since they are recorded in big-endian format, they must be read 4 bytes at a time, reversing the byte order to reconstruct the numerical values.

train-images-idx3-ubyte: Training image datatrain-labels-idx1-ubyte: Training label datat10k-images-idx3-ubyte: Test image datat10k-labels-idx1-ubyte: Test label data

The actual image data follows the header, with each pixel stored as a value from 0 to 255. Upon reading, all pixel values were divided by 255 to normalize them before being used as input data.

2.3 Source Code

The full source code used in the experiment is provided below.

Listing 5: main.cpp (Full text)

#include <algorithm>#include <cmath>#include <cstdlib>#include <fstream>#include <iostream>#include <vector>

#include "Eigen/Core"#include "Eigen/Dense"

using namespace std;

constexpr int INPUT_NODES = 784; // 入力層のノード数constexpr int HIDDEN_NODES = 128; // 隠れ層のノード数constexpr int OUTPUT_NODES = 10; // 出力層のノード数constexpr double LEARNING_RATE = 0.01; // 学習率constexpr int EPOCHS = 10; // エポック数

double sigmoid(double _x) { return 1.0 / (1.0 + exp(-_x)); } // sigmoid関数double sigmoid_d(double _x) { return _x * (1.0 - _x); } // sigmoidの微分

double relu(double _x) { return max(0.0, _x); } // relu関数double relu_d(double _y) { return _y > 0.0 ? 1.0 : 0.0; } // reluの微分

class NeuralNetwork { private: Eigen::MatrixXd w_ih; // 入力層 -> 隠れ層の重み Eigen::MatrixXd w_ho; // 隠れ層 -> 出力層の重み Eigen::VectorXd hidden_outputs; // 隠れ層の出力

public: // 初期化 NeuralNetwork() { // 隠れ層 double weight_scale_ih = sqrt(2.0 / INPUT_NODES); // He初期化係数 w_ih = Eigen::MatrixXd::Random(HIDDEN_NODES, INPUT_NODES) * weight_scale_ih; // He初期化

// 出力層 double weight_scale_ho = sqrt(1.0 / HIDDEN_NODES); // Xavier初期化係数 w_ho = Eigen::MatrixXd::Random(OUTPUT_NODES, HIDDEN_NODES) * weight_scale_ho; // Xavier初期化 }

// 順伝播を行う Eigen::VectorXd query(const Eigen::VectorXd& _inputs) { // 隠れ層 Eigen::VectorXd hidden_inputs = w_ih * _inputs; // 重み行列と入力行列を掛けた結果を出力とする hidden_outputs = hidden_inputs.unaryExpr(&relu); // 出力にReluを適応する

// 出力層 Eigen::VectorXd final_inputs = w_ho * hidden_outputs; // 重み行列と隠れ層の出力行列を掛けた結果を出力とする Eigen::VectorXd final_outputs = final_inputs.unaryExpr(&sigmoid); // 出力にSigmoidを適応する return final_outputs; }

// 学習 void train(const Eigen::VectorXd& _inputs, const Eigen::VectorXd& _targets) { Eigen::VectorXd final_outputs = query(_inputs); // 順伝搬

Eigen::VectorXd output_errors = _targets - final_outputs; // 誤差計算 Eigen::VectorXd hidden_errors = w_ho.transpose() * output_errors; // 隠れ層の誤差計算

// 出力層の勾配 Eigen::VectorXd output_gradients = output_errors.cwiseProduct(final_outputs.unaryExpr(&sigmoid_d)); w_ho += LEARNING_RATE * (output_gradients * hidden_outputs.transpose()); // 出力層の重み更新

// 隠れ層の勾配 Eigen::VectorXd hidden_gradients = hidden_errors.cwiseProduct(hidden_outputs.unaryExpr(&relu_d)); w_ih += LEARNING_RATE * (hidden_gradients * _inputs.transpose()); // 隠れ層の重み更新 }};

int read_int(ifstream& file) { unsigned char bytes[4]; file.read((char*)bytes, 4); return (bytes[0] << 24) | (bytes[1] << 16) | (bytes[2] << 8) | bytes[3];}

void load_mnist(const string& _image_path, const string& _label_path, vector<Eigen::VectorXd>& _images, vector<int>& _labels) { ifstream img_file(_image_path, ios::binary); ifstream lbl_file(_label_path, ios::binary);

if (not(img_file.is_open() and lbl_file.is_open())) exit(1);

read_int(img_file);

int num_items = read_int(img_file); int rows = read_int(img_file); int cols = read_int(img_file);

cout << "num_items: " << num_items << endl; cout << "rows: " << rows << endl; cout << "cols: " << cols << endl;

read_int(lbl_file); read_int(lbl_file);

_images.reserve(num_items); _labels.resize(num_items);

for (int i = 0; i < num_items; ++i) { unsigned char label; lbl_file.read((char*)&label, 1); _labels[i] = (int)label; Eigen::VectorXd img_vec(rows * cols); for (int j = 0; j < rows * cols; ++j) { unsigned char pixel; img_file.read((char*)&pixel, 1); img_vec[j] = pixel / 255.0; } _images.emplace_back(img_vec); }}

int main() { vector<Eigen::VectorXd> train_images, test_images; vector<int> train_labels, test_labels;

load_mnist("train-images.idx3-ubyte", "train-labels.idx1-ubyte", train_images, train_labels); load_mnist("t10k-images.idx3-ubyte", "t10k-labels.idx1-ubyte", test_images, test_labels);

NeuralNetwork nn;

for (int epoch = 1; epoch <= EPOCHS; ++epoch) { for (size_t i = 0; i < train_images.size(); ++i) { Eigen::VectorXd targets = Eigen::VectorXd::Constant(OUTPUT_NODES, 0.01); targets[train_labels[i]] = 0.99; nn.train(train_images[i], targets); } cout << "Epoch " << epoch << " done" << endl; }

int correct_count = 0; for (size_t i = 0; i < test_images.size(); ++i) { Eigen::VectorXd outputs = nn.query(test_images[i]); int predicted_label; outputs.maxCoeff(&predicted_label); if (predicted_label == test_labels[i]) correct_count++; }

double accuracy = (double)correct_count / test_images.size() * 100.0; cout << "Accuracy: " << accuracy << "%" << endl;

return 0;}Compilation Command:

clang++ -std=c++23 -O3 -march=native main.cpp3. Results

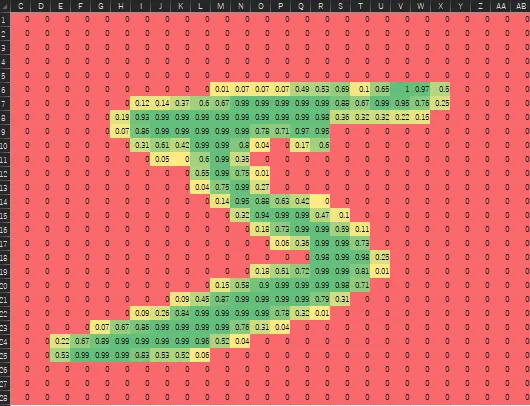

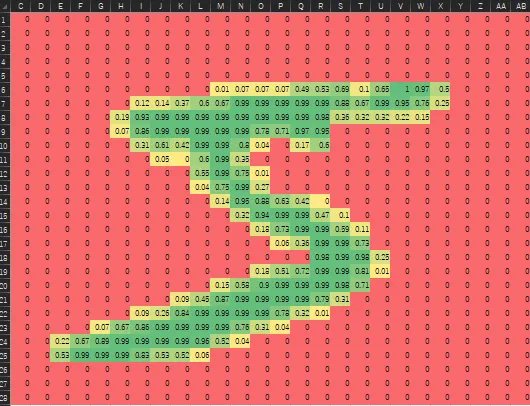

Using 60,000 training images, learning was conducted for 10 epochs, and the accuracy was measured with 10,000 test images. The recognition accuracy was approximately 97.53%, confirming a high level of precision.

4. Discussion

- Activation Function: Using ReLU in the hidden layer prevented the vanishing gradient problem, achieving efficient learning.

- Learning Rate: A setting of 0.01 proved appropriate for both stability and convergence speed.